AI fluency is the ability to work effectively alongside AI tools. At Railsware, we define it through four positive behavioral patterns: effective application, critical validation, ownership & accountability, and safety & ethics awareness. It sits inside our competency model as a horizontal capability that applies across every role, from engineering to recruiting to accounting.

This post explains what we mean by each of the four patterns, how they show up in day-to-day work, how they scale with seniority, and how we assess them in hiring.

We’ve been building products at Railsware since 2007. When AI tools crossed the threshold for real product work in 2025, we didn’t add “AI” as a perk or a training initiative. We added it to the competency model that helps us choose the best candidates and supports our teams’ professional growth.

In this article, we’ll explore why we made AI part of our competency model and what that looks like in practice.

TL;DR

- AI fluency is not the same as AI literacy. Literacy is knowing what AI is. Fluency is using it with judgment in your actual work, knowing when it helps, when it doesn’t, and what it costs.

- We define AI fluency as four observable behaviors. They map cleanly to the four parts of working with a probabilistic tool: framing the task, choosing what to delegate, judging the output, and owning the result.

- It sits in the horizontal bar of our competency model. Every role at Railsware includes it, but the way an engineer demonstrates fluency differs from how a recruiter or accountant does.

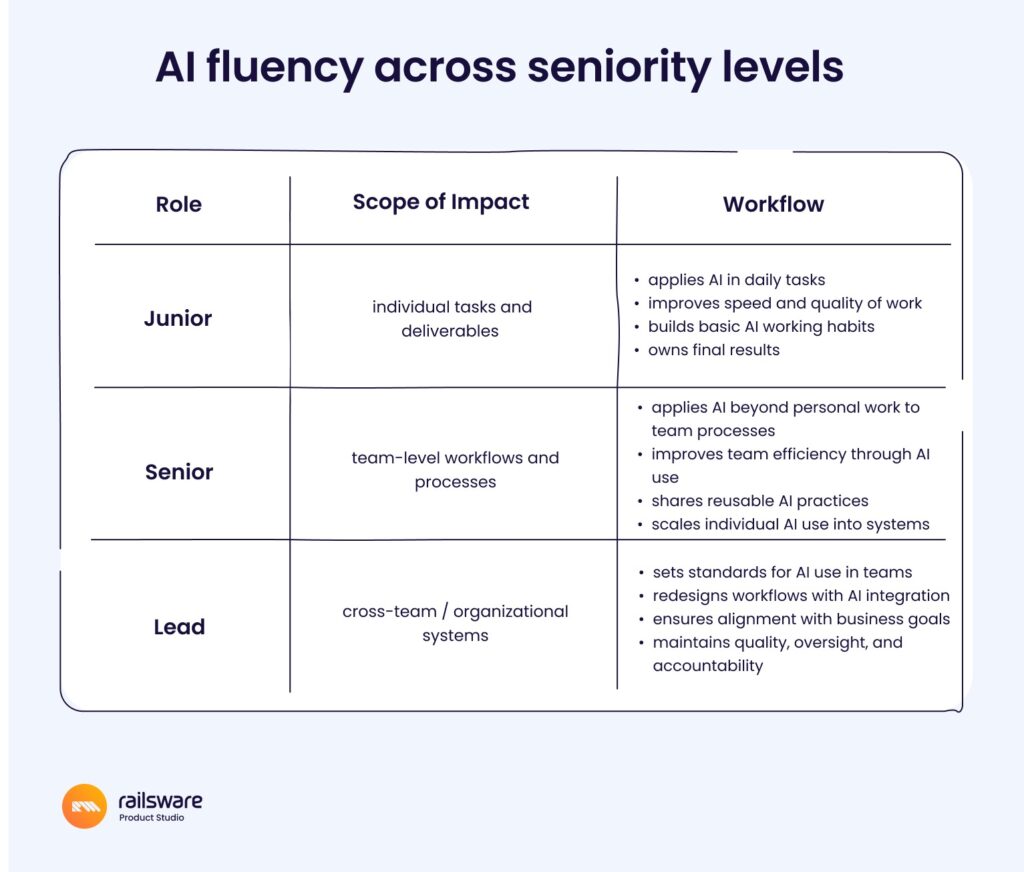

- Expectations scale with seniority. Junior fluency is about personal productivity. Senior fluency shapes team workflows. Lead-level fluency sets standards for how the organization works with AI.

- We assess it in hiring through how candidates think alongside AI, not whether they used it. Fluency shows up in the steering, not the speed.

What is AI fluency?

AI fluency is the ability to use AI tools with intent and judgment in your actual work, not just the ability to operate them. It’s the difference between a person who can prompt ChatGPT and a person who knows when prompting ChatGPT is the wrong move. We treat it as a competency, which means it can be defined, observed, developed, and assessed against a shared standard.

The distinction we hold most tightly is between literacy and fluency.

AI literacy is conceptual: you understand what large language models do, what their limits are, what “training data” means. Useful, but not enough.

AI fluency is operational: you know how to frame a task so an AI can help, what to give it and what to keep, how to read its output critically, and how to take responsibility for what gets shipped. Literacy is what you know. Fluency is what you do with what you know.

Why we made AI fluency a competency, not a tool training

Most companies treat AI as a tool rollout: pick a vendor, write a policy, run a workshop, declare victory. We’ve watched that pattern produce two failure modes. The first is uneven adoption, where a handful of enthusiasts get great leverage and everyone else stays where they were. The second is uncritical adoption, where teams ship AI output without the judgment to catch what’s wrong with it.

A competency model fixes both problems by changing what gets measured. Tool rollouts measure usage. Competency models measure capability. When AI fluency is a line item in your peer review, your promotion criteria, and your job descriptions, it stops being optional and stops being mechanical at the same time. People develop it because it’s part of how they’re evaluated, and they develop it well because the criteria are about judgment, not button-clicking.

The concept of a “competency model” started with a problem at the U.S. State Department in the early 1970s. The department was struggling to pick the right diplomats, people who could represent a country in complex, unpredictable environments, and realized that traditional checklists of “tasks and skills” weren’t enough.

They brought in Harvard psychologist Dr. David McClelland. He and his team introduced a different approach that focused on the personal characteristics behind high performance.

This leads us to the key difference between a job analysis and a competency model. A job analysis describes what “good performance” looks like. A competency model goes further and looks at what drives exceptional performance.

In that sense, a competency model is a map. It shows where you are, where you can grow, and what you need to develop to move forward in your career. And every organization needs its own version of that map.

This fits how we already think about growth. Our competency model has 36 capabilities organized into three tiers: a horizontal bar of foundations (responsibility, collaboration, focus, curiosity), a leadership tier, and a vertical bar of discipline-specific expertise.

The structure is built around the T-shaped professional, and AI fluency sits in the horizontal bar because it cuts across every discipline. An engineer needs it. So does a recruiter. So does an accountant. (By the way, you can explore our open roles on the Career Page.)

The four patterns of AI fluency

We mapped AI fluency across four observable patterns that shape how people work with probabilistic systems in practice:

- using AI effectively and responsibly to improve work outcomes and decision-making;

- validating outputs with critical judgment;

- remaining accountable for quality and final results;

- using AI with safety and ethics.

Each one is observable, which is what makes the framework usable for review and hiring rather than a slogan.

1. Effective application: using AI to improve outcomes

Effective application is the ability to translate ambiguity into structured input an AI can act on. It covers prompt clarity, but it goes further than that. The real skill is naming the goal, the constraints, the context, and the success criteria before you ask for anything. Vague input produces vague output, and the failure usually traces back to a person who didn’t know what they wanted before they started typing.

A person with strong application skills tends to write longer first prompts and shorter follow-ups because they front-loaded the context. They reference what they’ve already tried. They name the format they want the answer in. They tell the AI what to ignore. The signal is whether someone treats the prompt as a brief or as a wish.

Take a marketer kicking off a new campaign. The wish version sounds like: “Give me five ad headlines for our new pricing page.” What comes back is generic, because the prompt is generic. The brief version names the goal (drive trial signups from mid-market PMs, not awareness), the audience (product managers at 50–500-person SaaS companies, already familiar with the category), the constraint (e.g., under 60 characters, no superlatives, no exclamation points, no “unlock” or “transform”), the context (here’s the headline that worked last quarter, here are three that didn’t, here’s what changed on the page), and what to ignore (don’t propose new value props; we’re testing copy, not positioning). The follow-ups are short because the first prompt did the work: “tighten #2 and #4” rather than “try again but better.”
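One way to picture the difference between a wish and a brief is as a small data structure. The sketch below is illustrative only: `PromptBrief`, its field names, and the `render` method are hypothetical, not part of any library or of the framework itself. It simply shows how naming the goal, audience, constraints, context, and exclusions up front turns a one-line request into a structured prompt.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    """Hypothetical container for the parts of a brief named in the example."""
    goal: str
    audience: str
    constraints: list[str] = field(default_factory=list)
    context: list[str] = field(default_factory=list)
    ignore: list[str] = field(default_factory=list)

    def render(self, task: str) -> str:
        """Assemble the task and the brief into a single prompt string."""
        sections = [
            f"Task: {task}",
            f"Goal: {self.goal}",
            f"Audience: {self.audience}",
        ]
        # Optional sections are emitted only when they have content.
        for title, items in (
            ("Constraints", self.constraints),
            ("Context", self.context),
            ("Ignore", self.ignore),
        ):
            if items:
                sections.append(f"{title}:")
                sections.extend(f"- {item}" for item in items)
        return "\n".join(sections)


brief = PromptBrief(
    goal="Drive trial signups from mid-market PMs, not awareness",
    audience="Product managers at 50-500-person SaaS companies",
    constraints=["Under 60 characters", "No superlatives", "No exclamation points"],
    context=["Last quarter's winning headline: <paste here>"],
    ignore=["New value props; we are testing copy, not positioning"],
)
prompt = brief.render("Write five ad headlines for our new pricing page")
print(prompt)
```

The wish version is just the `task` string. Everything else in the rendered prompt is the context a person with strong application skills supplies before asking.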

2. Critical validation: knowing what to hand to AI and what to keep

This pattern is the judgment of what should go to AI and what shouldn’t. It’s the most underrated of the four because it’s invisible when done well. A person with strong validation skills makes a lot of small decisions every day about which parts of a task benefit from AI speed and which require human reasoning, ethics, taste, or accountability. They don’t outsource decisions that have to be theirs to make.

The wrong approach is “use AI wherever you can.” The right one is “use AI where the cost of being wrong is low and the gain in speed is high, and keep the parts where the opposite is true.” A senior person doing this well will use AI to draft a difficult email and then rewrite half of it because the draft missed the relationship context. They’ll use AI to summarize a long doc and then read the doc themselves before making a decision based on the summary. The pattern isn’t the use; it’s the boundary.
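The delegation rule above can be written down as a toy decision function. This is a deliberately simplified sketch, not a scoring system: the `Level` enum, the `should_delegate` name, and the "judgment call" outcome for mixed cases are assumptions added for illustration, since the rule itself only describes the two clear-cut corners.

```python
from enum import Enum


class Level(Enum):
    LOW = 1
    HIGH = 2


def should_delegate(cost_of_error: Level, speed_gain: Level) -> str:
    """Toy version of the rule: delegate when errors are cheap and the speed gain is high."""
    if cost_of_error is Level.LOW and speed_gain is Level.HIGH:
        return "delegate"
    if cost_of_error is Level.HIGH and speed_gain is Level.LOW:
        return "keep"
    # Mixed cases are not covered by the rule; this label is an assumption.
    return "judgment call"


print(should_delegate(Level.LOW, Level.HIGH))
print(should_delegate(Level.HIGH, Level.LOW))
print(should_delegate(Level.HIGH, Level.HIGH))
```

Most real tasks land in the mixed cases, which is why the pattern is about judgment rather than a lookup table.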

3. Ownership & accountability: judging what comes back

This pattern is the ability to evaluate AI output against quality, accuracy, and relevance, and to refine it rather than ship it. It’s the direct counterweight to AI’s most dangerous property: the output looks right more often than it is right. A person with strong ownership and accountability treats every AI response as a draft from a smart but unreliable colleague: they read it carefully, sanity-check the parts that look too clean, and rewrite where it matters.

This is the behavior most often missing in people who score well on the other three. They write good prompts (effective application), pick reasonable tasks (critical validation), and ship safely (safety & ethics awareness), but they accept the output too easily. The tell is in the revision rate. Strong ownership produces a lot of small edits, follow-up prompts that push back, and occasional decisions to throw the AI output away and start over. Weak ownership produces clean copy that reads fine and is wrong in ways nobody notices for a week.

4. Safety and ethics: using AI responsibly

Safety & ethics is the ability to use AI in ways that respect privacy, security, fairness, and organizational boundaries. It combines technical awareness with professional judgment.

In practice, this includes checking facts before sending, not pasting confidential data into tools that don’t have an enterprise agreement, flagging when AI was used in places where it matters (research, decisions, customer-facing copy), and being able to defend any output as if you wrote it yourself. Because in every way that counts, you did.

How AI fluency scales with seniority

AI fluency expectations scale with role level the same way any other competency does. The four positive behavioral patterns stay constant. What changes is the scope of what each one applies to.

A Junior with strong AI fluency uses it to do their own work better. Their application helps them work faster and with more clarity. Their validation catches mistakes and weak reasoning in their own outputs. Their accountability ensures they take ownership of the final result instead of relying blindly on generated content. Their safety & ethics awareness helps them use AI responsibly within established boundaries. The unit of impact is primarily themselves.

A Senior with strong AI fluency uses it to shape how their team works. Their application extends beyond personal productivity into improving team workflows and decision-making through templates and prompts. Their validation calls help establish what to hand to AI and what to keep. Their accountability sets the bar that pull requests, briefs, or reports get reviewed against. Their safety & ethics awareness shows up in the workflows they design, not just their own behavior. The unit of impact is the team.

A Lead with strong AI fluency uses it to set standards across the organization. Their application influences how AI gets briefed at scale. Their validation shapes policy: what categories of work are AI-appropriate, what aren’t, and where the lines move. Their accountability shows up in evaluation criteria, review processes, and the bar for “good.” Their safety & ethics awareness shapes the guardrails everyone else operates inside. The unit of impact is the company.

Every role at Railsware benefits from AI fluency, but the application varies. An engineer using AI to scaffold an MVP looks different from a recruiter using AI to triage candidate pipelines or an accountant using AI to spot anomalies in a ledger. What stays constant across all of them is the four patterns. The behaviors are universal; the use cases aren’t.

How we assess AI fluency in hiring

We assess AI fluency in hiring by watching how candidates think alongside AI on practical tasks, not by asking whether they use it. The use is assumed. What we’re looking for is the steering: the four positive behavioral patterns observed in real work.

In the initial screen, we’re looking for curiosity, not credentials. Nobody is expected to recite frameworks or name every model. What stands out is evidence of having tried things, hit limits, and figured out workarounds. A candidate who can describe a moment they decided AI was the wrong tool for a job tells us more than one who lists ten tools they’ve used.

On practical tasks, AI is welcome and expected. We pay attention to four signals that map directly to the framework:

- Effective application. How does the candidate frame the prompt? Do they front-load context and constraints, or do they treat the AI as a search box?

- Critical validation. Where do they choose to use AI in the task, and where do they choose not to? The choice itself is the signal.

- Ownership & accountability. Do they take the first output, or push back on it? How do they decide when something AI produced is good enough?

- Safety & ethics awareness. Do they own what they deliver, regardless of how it was produced? Can they defend any line in it?

A strong candidate can usually explain when AI is the wrong tool, refines AI output rather than shipping it raw, adjusts approach based on the goal rather than copying patterns, and takes responsibility for the result. None of those are about AI specifically. They’re about being a good professional who happens to work with AI.

How to start building this in your own team

If you’re considering something similar, three short steps cover most of what we did.

- Define fluency as behaviors, not tools. Pick four to six observable behaviors that describe what good AI use looks like in your context. The four patterns are what worked for us; your version may differ. The key move is making the criteria about judgment, not tooling.

- Put it in the documents that matter. Job descriptions, peer reviews, promotion criteria. If AI fluency isn’t on the page where decisions get made, it stays optional.

- Calibrate expectations by level. What looks like fluency for a junior is not what looks like fluency for a lead. Write the levels out so people can see where they are and where they’re going.

The work isn’t in the framework; it’s in the consistency. A competency only becomes useful when peers, managers, and interviewers all use it the same way. Plan for the calibration sessions – that’s where the value grows.

FAQs

What is the difference between AI literacy and AI fluency?

AI literacy is conceptual understanding of what AI is, how it works, and what its limits are. AI fluency is operational: the ability to use AI tools with judgment in your actual work, knowing when they help, when they don’t, and what risks they introduce. Literacy is what you know. Fluency is what you do with it.

What are the positive patterns of AI fluency?

The four behavioral patterns are effective application (how clearly you frame the task), critical validation (what you choose to hand to AI vs. keep), ownership & accountability (how well you evaluate and refine the output), and safety & ethics awareness (how responsibly you use AI and own the result). Together they describe the four moments of working with a probabilistic tool.

How do you measure AI fluency objectively?

By defining behavioral indicators rather than asking “Are you good with AI?” At Railsware, we observe how someone structures prompts (application), decides what to delegate and what not (validation), evaluates and refines output (ownership & accountability), and handles security and accountability (safety & ethics awareness). These behaviors are observable in peer reviews, project work, and hiring tasks.

How does AI fluency change with seniority?

Expectations scale with role. At a Junior level, AI fluency is about individual productivity. At a Senior level, it’s about shaping team workflows and reusable practices. At a Lead level, it’s about setting organization-wide standards and guardrails. The four positive patterns stay constant; the scope changes.

Do all roles need AI fluency?

Yes, but the application varies. An engineer’s use of AI looks different from a recruiter’s or an accountant’s. What’s constant across roles is the underlying behaviors: framing tasks well, choosing what to delegate, judging the output, and owning the result.

How do you assess AI fluency in hiring?

By watching candidates think alongside AI on practical tasks, not by asking whether they use it. The signal isn’t speed or polish; it’s how they steer the AI: how they frame the prompt, what they choose to delegate, how they push back on the output, and how they take responsibility for what they deliver.

Where this fits

Building products with AI requires more than tool access. It requires teams who know when to use AI, when to override it, and how to take responsibility for the result. That’s the engineering mindset behind every product we ship.

If you’re building an MVP and want a team that pairs AI-assisted speed with senior judgment, talk to our AI-driven MVP team.